AI Agents Are a Toy. Here’s What Actually Scales.

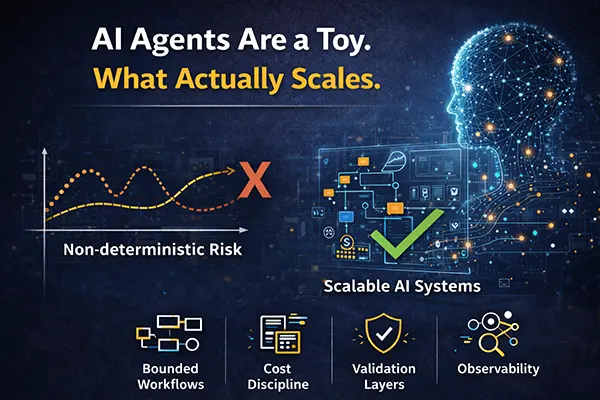

Most AI agents work in demos but collapse under production constraints. Learn why autonomous agent hype hides nondeterministic risk and what scalable AI architecture actually requires.

Discover how bounded workflows, cost discipline, validation layers, and observability turn probabilistic models into reliable revenue systems.

If you are already wrestling with this question in your own company, we offer a 2-week CTO Health Check and ongoing Fractional CTO support. You can book a free 30-minute call or view the services whenever it is convenient, or simply email us at info@sharplogica.com if you have specific questions.


The current AI cycle is obsessed with agents:

- Autonomous agents.

- Multi-agent systems.

- Self-improving agents.

- AI that plans, reasons, executes, and coordinates itself.

The demos are impressive; the production systems are not.

In most companies I review, “AI agents” are not autonomous systems. They are loosely orchestrated chains of prompts wrapped in optimistic language. They work in demos, fail under edge cases, and collapse under cost pressure.

The uncomfortable truth is this: most AI agents are architectural theater.

What actually scales looks very different.

The Problem With Agent Thinking

The idea behind agents is attractive. You give the model a goal, and it decides how to achieve it. It calls tools, plans steps, revises strategy, and eventually produces an outcome.

In theory, that flexibility reduces engineering effort.

In practice, it introduces nondeterministic behavior into systems that require determinism.

Production software needs four things: predictability, bounded cost, controlled side effects, and observability.

Agents introduce variability across all four dimensions.

When a system decides its own sequence of actions dynamically, the number of possible execution paths explodes. Testing becomes incomplete, failure modes become difficult to enumerate, and cost becomes difficult to forecast.

That is acceptable in a research lab, but very dangerous in revenue-critical workflows.
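A rough way to see the growth is to count tool-call sequences. The sketch below assumes a hypothetical agent that may pick any of `num_tools` tools at each of up to `max_steps` steps, and compares it with a fixed pipeline where each stage either succeeds or stops the run. Both function names and the numbers are illustrative assumptions, not measurements from a real system.

```python
def count_agent_paths(num_tools: int, max_steps: int) -> int:
    """Count distinct tool-call sequences of length 1..max_steps.

    Simplification: the agent may choose any tool at every step. Real agents
    also vary arguments and stopping points, so this is a lower bound.
    """
    return sum(num_tools ** steps for steps in range(1, max_steps + 1))


def count_pipeline_paths(num_stages: int) -> int:
    """Count paths through a fixed pipeline that halts at the first failure."""
    # One all-success path, plus one failure path per stage.
    return num_stages + 1
```

With 5 tools and up to 10 steps, `count_agent_paths(5, 10)` gives 12,207,030 possible sequences, while `count_pipeline_paths(10)` gives 11. Only one of those numbers can be covered by a test suite.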

Demos Optimize For Magic. Systems Optimize For Control.

Most agent frameworks are optimized for demonstration value. They prioritize autonomy, creative planning, and minimal developer intervention.

Production systems optimize for something else entirely: control. More specifically:

- Control over token budgets.

- Control over retries.

- Control over validation.

- Control over tool invocation.

- Control over failure handling.

An agent that “figures things out” sounds powerful. A system that behaves predictably under load is powerful.

When I review AI-heavy systems in SaaS products, the scalable ones rarely resemble open-ended agents. They resemble structured pipelines with well-defined boundaries.

The difference is not philosophical; it is architectural.

What Actually Scales: Bounded Workflows

The systems that scale share a consistent pattern. They treat large language models as components inside a deterministic framework rather than as autonomous decision makers.

Instead of asking an agent to solve an open-ended goal, they define:

- A specific input contract.

- A constrained output schema.

- A maximum token budget.

- A fixed retry policy.

- A validation step before downstream execution.

The model is responsible for transformation, not orchestration. Orchestration must be explicit.

This design dramatically reduces entropy. It makes failure modes observable, cost predictable, and integration testable.

When scale increases, structured systems degrade gracefully. Open-ended agents do not.
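The five elements above can be sketched as a single bounded step. This is a minimal illustration, not a reference implementation: the ticket-classification task, the `StepPolicy` fields, and the `call_model` callable (which stands in for whatever model client you use) are all assumptions made for the example.

```python
import json
from dataclasses import dataclass
from typing import Callable

ALLOWED_CATEGORIES = ("billing", "bug", "other")  # hypothetical schema values


@dataclass(frozen=True)
class StepPolicy:
    max_output_tokens: int = 256  # token cap handed to the model call
    max_attempts: int = 2         # fixed retry policy, no open-ended loops


class ValidationError(Exception):
    """Raised when model output does not match the expected schema."""


def validate_ticket_label(raw: str) -> dict:
    """Constrained output schema: {"category": <allowed>, "urgent": <bool>}."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValidationError(f"not JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValidationError("expected a JSON object")
    if data.get("category") not in ALLOWED_CATEGORIES:
        raise ValidationError(f"bad category: {data.get('category')!r}")
    if not isinstance(data.get("urgent"), bool):
        raise ValidationError("urgent must be a boolean")
    return {"category": data["category"], "urgent": data["urgent"]}


def classify_ticket(text: str, call_model: Callable[[str, int], str],
                    policy: StepPolicy = StepPolicy()) -> dict:
    """Run one bounded transformation: text in, validated dict out."""
    if not text.strip():  # input contract
        raise ValueError("ticket text must be non-empty")
    last_error = None
    for _ in range(policy.max_attempts):  # the code owns the retries
        raw = call_model(text, policy.max_output_tokens)
        try:
            return validate_ticket_label(raw)  # validate before downstream use
        except ValidationError as exc:
            last_error = exc
    raise ValidationError(
        f"gave up after {policy.max_attempts} attempts: {last_error}"
    )
```

The model never decides whether to retry or what to call next. It receives one input, returns one output, and the surrounding code decides what happens with it.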

Cost Is the Hidden Constraint

One of the least discussed aspects of agent architectures is cost behavior.

Agents often iterate internally, calling tools multiple times, reflecting, replanning, and retrying autonomously, with each step consuming tokens and each tool call introducing latency.

In small-scale experiments this is acceptable, but at scale it becomes volatile.

If cost per transaction varies widely depending on how many internal loops an agent performs, forecasting margin becomes difficult, and in enterprise SaaS, unpredictable cost structures become a valuation risk.

Scalable systems treat tokens like financial units by enforcing budgets, terminating workflows when thresholds are reached, and logging consumption explicitly.
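One way to treat tokens like financial units is a small ledger that every step must charge before it runs. The sketch below is an assumption about how that could look: `TokenBudget`, `BudgetExceeded`, and the idea of reserving each step's maximum token allowance up front are illustrative choices, not part of any particular framework.

```python
from dataclasses import dataclass, field


class BudgetExceeded(Exception):
    """Raised when a workflow would spend more tokens than it was granted."""


@dataclass
class TokenBudget:
    limit: int
    spent: int = 0
    ledger: list = field(default_factory=list)  # (step_name, tokens) entries

    @property
    def remaining(self) -> int:
        return self.limit - self.spent

    def charge(self, step_name: str, tokens: int) -> None:
        """Record usage for a step, or stop the workflow if it would overspend."""
        if tokens > self.remaining:
            raise BudgetExceeded(
                f"{step_name} needs {tokens} tokens, "
                f"only {self.remaining} left of {self.limit}"
            )
        self.spent += tokens
        self.ledger.append((step_name, tokens))
```

A workflow created with `TokenBudget(limit=1000)` that charges 400 tokens for an extraction step and 500 for a summary has 100 left, so a third step asking for 200 raises `BudgetExceeded` instead of quietly running. The ledger gives finance and engineering the same per-step numbers.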

Agents rarely enforce their own discipline; architecture must.

Observability Beats Autonomy

Another recurring issue in agent-driven systems is limited observability, because when behavior is emergent rather than predefined, debugging becomes interpretive rather than systematic.

If a workflow fails, engineers often inspect prompt logs and attempt to infer what went wrong, an approach that does not scale.

Scalable AI systems are observable at each stage, with input logged, output validated, tool calls tracked, and failures categorized.
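As a minimal sketch of stage-level observability, the wrapper below runs one stage and emits a single structured log record with a categorized outcome. The function name `run_stage`, the logger name, and the status labels (`ok`, `invalid_output`, `timeout`, `error`) are assumptions chosen for illustration; it uses only Python's standard `logging` and `json` modules.

```python
import json
import logging
import time
from typing import Any, Callable

logger = logging.getLogger("ai_pipeline")  # hypothetical logger name


def run_stage(name: str, fn: Callable[[Any], Any], payload: Any,
              validate: Callable[[Any], bool]) -> Any:
    """Run one stage and log a structured record describing the outcome."""
    started = time.monotonic()
    event = {"stage": name, "input_chars": len(str(payload))}
    try:
        result = fn(payload)
        if not validate(result):
            event["status"] = "invalid_output"
            raise ValueError(f"stage {name!r} produced invalid output")
        event["status"] = "ok"
        return result
    except TimeoutError:
        event["status"] = "timeout"
        raise
    except Exception:
        # Keep a more specific category if one was already assigned.
        event.setdefault("status", "error")
        raise
    finally:
        # Runs on success and failure, so every stage leaves exactly one record.
        event["duration_ms"] = round((time.monotonic() - started) * 1000, 1)
        logger.info(json.dumps(event))
```

Because every record carries a stage name and a failure category, a spike in `invalid_output` for one stage is a query, not an afternoon of reading prompt logs.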

Autonomy without observability creates black boxes, and black boxes do not belong in revenue-critical systems.

The Real Blueprint

The systems that scale resemble dataflow pipelines more than autonomous agents.

They break problems into stages where each stage has a single responsibility and produces structured output, allowing downstream components to consume validated data rather than guessing intent.

Retries are explicit, timeouts are enforced, budgets are capped, and state transitions are logged.
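Put together, the orchestration layer can be very small. The sketch below is one possible shape, under stated assumptions: `Stage`, `PipelineError`, and `run_pipeline` are hypothetical names, and timeouts are assumed to be enforced inside each stage's callable (for example through the model client's request timeout), which surfaces here as a `TimeoutError`.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Stage:
    name: str
    run: Callable[[Any], Any]  # single responsibility: one transformation
    max_attempts: int = 1      # explicit retry count per stage


class PipelineError(Exception):
    """Raised when a stage exhausts its attempts; carries the transition log."""

    def __init__(self, stage: str, transitions: list):
        super().__init__(f"stage {stage!r} failed")
        self.stage = stage
        self.transitions = transitions


def run_pipeline(stages, payload):
    """Run stages in order; return (final_payload, transition_log)."""
    transitions = []
    for stage in stages:
        for attempt in range(1, stage.max_attempts + 1):
            transitions.append((stage.name, attempt, "started"))
            try:
                payload = stage.run(payload)
            except TimeoutError:
                transitions.append((stage.name, attempt, "timeout"))
            except Exception as exc:
                transitions.append(
                    (stage.name, attempt, f"error:{type(exc).__name__}")
                )
            else:
                transitions.append((stage.name, attempt, "succeeded"))
                break
        else:
            # Every attempt failed: stop here instead of improvising.
            raise PipelineError(stage.name, transitions)
    return payload, transitions
```

The sequence of stages is fixed in code, so the transition log for any run is a short list that can be compared against the expected one in a test.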

This architecture is less magical but far more reliable because it accepts a fundamental reality: large language models are probabilistic components and should not be given control over system-level orchestration.

They should instead be treated as bounded transformation engines.

Why the Hype Persists

The reason agent narratives dominate discussion is simple: they are compelling, promising less engineering and more intelligence.

But scalable systems require more engineering, not less, because they depend on thoughtful orchestration, defensive design, explicit state management, and strict budget control.

The fantasy of autonomous intelligence is appealing, while the discipline of deterministic architecture is less glamorous.

Investors and enterprise buyers care about discipline, not magic.

The CTO Responsibility

CTOs face subtle pressure as boards demand AI strategy, marketing wants innovation headlines, and engineering teams want to experiment.

The correct response is not to reject agents entirely but to contain them by experimenting in sandboxes, isolating research from production, and treating autonomy as optional rather than foundational.

When AI becomes part of a revenue workflow, it must sit inside a controlled architectural boundary.

The companies that will win in this cycle are not those with the most autonomous agents, but those with the most disciplined systems.

What Actually Scales

What scales is not autonomy, but structure. It is not open-ended reasoning, but bounded transformation. It is not agent magic, but deterministic workflow design with validation, retries, budgets, and observability.

AI agents may be useful exploration tools, but production systems that generate revenue demand something far less romantic and far more powerful: control.

If this mirrors your situation and you want concrete next steps, here is how we can work together:

CTO Health Check (2 weeks). A focused diagnostic of your architecture, delivery, and team. You get a clear view of risks, a 6 to 12 month technical roadmap, and specific, prioritized recommendations.

Fractional CTO services. Ongoing strategic and hands-on leadership. We work directly with your leadership team and engineers to unblock delivery, de-risk key decisions, and align technology with revenue.

Free 30-minute consultation. A short working session to discuss your current situation and see whether our support is the right fit for your company.

To explore these options, you can book a call, view the services, or email us at info@sharplogica.com with any specific questions.

